If you understand how these things relate to software development work, then you require no training or coaching.

Self-discipline

Handling stress and working with others require self-awareness and emotional control. So do all the other skills listed here.

Learning How to Learn

It is widely agreed that software development teams are either improving mindfully, or they are deteriorating in their effectiveness. There is no steady state. To make improvement possible, team members must be open to learning new things and willing to question things they already know.

Focus

To achieve anything, you must focus on it. That means uninterrupted time to think and work. It means finishing one thing before starting another. It means not getting distracted. It means keeping your eyes on the goal and not dwelling on the difficulties.

Persistence

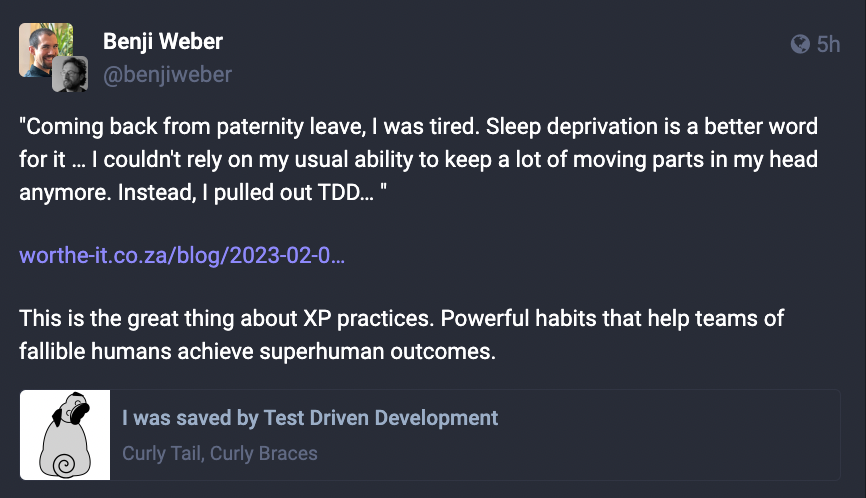

Overcoming challenges and meeting objectives require persistence. So does embracing change to improve our work.

Collaboration

In almost all cases, software development work benefits from collaboration between two or more people. “The more you share, the more your bowl will be plentiful.” – James S.A. Corey, The Expanse.

Trust

Effective collaboration requires trust and the courage to be seen as vulnerable or imperfect.

Leadership

Leadership doesn’t mean giving orders. It means giving credit, giving time, giving space, giving encouragement, giving opportunity, giving trust. The more you give, the more you get.

Rhythm

A steady, predictable, consistent, and sustainable pace of work helps ensure continuous flow, maximize delivery effectiveness, and minimize team stress.

Technical Skills

The field of software is constantly changing. Software architecture, design patterns, programming paradigms, design principles, development practices, automation, operations, and the methods and schools of testing, analysis, and other areas are all moving targets. To get started in this field, you need basic education or training in analysis, programming, and testing. You also need the non-technical skills listed above, so that you can keep yourself current with technical advances and collaborate with and learn from your colleagues.

Without the basic training, you will have no foundation to build on. It would be like trying to run a foot race across loose ice floes. The general discussion of what skills are necessary tends to emphasize the non-technical side of things; don’t take that to mean there are shortcuts on the technical side. The reason for the emphasis is that the non-technical skills have been underappreciated in the past. On the other hand, don’t worry if you feel as if there’s too much to learn. Once you have the basics, you can build on that knowledge little by little.