Recently, I watched a webinar that demonstrates a work flow for asynchronous code reviews using a tool from JetBrains called Upsource. The webinar started me thinking about the constraints that might cause a team to resort to asynchronous code reviews in the first place. While the product appears to be well made and easy to use, I wonder whether it offers a workaround to deeper problems that teams might wish to consider solving outright.

Gaining confidence in the code

Software development teams use various techniques to gain confidence that their code is functionally correct, meets non-functional requirements, and is maintainable or, as people like to say these days, habitable. These include making effective use of language type systems; understanding and applying generally-accepted software design principles; using robust approaches to design such as domain driven design or design by contract; balancing up-front and emergent software design; using reference models and architectures and appropriate frameworks to minimize the reinvention of wheels; clarifying needs through methods like specification by example; vetting architectural alternatives through prototyping or set-based concurrent engineering; maintaining a suite of automated functional checks at multiple levels of abstraction; driving development from executable specifications of one form or another; the use of static code analysis tools; and more.

One of the most effective ways to gain confidence in the code is to have qualified colleagues review it. The value of code reviews may be one of the few topics in the software development field that doesn’t generate endless circular debate. As far as I know, it’s almost universally understood to be a useful practice.

The need to shorten lead times

There’s been a trend in the past several years to shorten lead times for software delivery. Many organizations are striving to achieve continuous delivery, and some are already doing it. At the same time, software professionals have embraced lean thinking, a way of improving processes with an eye toward maximizing customer-defined value and minimizing waste.

Team work practices and code review techniques

Keeping in mind both the value of code reviews and the need to shorten delivery lead times, it seems natural to want to review code in a way that minimizes the amount of time lost to the review process. Code review can be regarded as an example of Type 1 muda (unavoidable non-value-add activity) as defined by Womack and Jones. It is waste in the sense that customers aren’t directly purchasing a code review, so they don’t care about it. It is necessary in the sense that it helps us gain confidence in the quality of the product we ship. When we have a necessary waste, we want to minimize its impact on lead time.

Some team work practices cause code reviews to incur more process overhead than others. The key factor is the level of collaboration among team members. Direct, whole-team collaboration, popularized by Woody Zuill as mob programming, provides the highest degree of collaboration. Pair programming, which became well-known as part of Extreme Programming, also provides a very high level of collaboration. With these methods, code review occurs continuously and naturally throughout the development process. Development and review are the same activity. Therefore, these methods incur the minimum possible amount of process overhead to support code reviews.

With that in mind, one would expect every development team to be keen to adopt such practices. But in reality, a number of organizational constraints may be present that inhibit a team’s ability to work in these ways. When that is the case, we want to adopt the practices that incur the lowest process overhead that is feasible in our particular situation. Let’s consider some of those constraints.

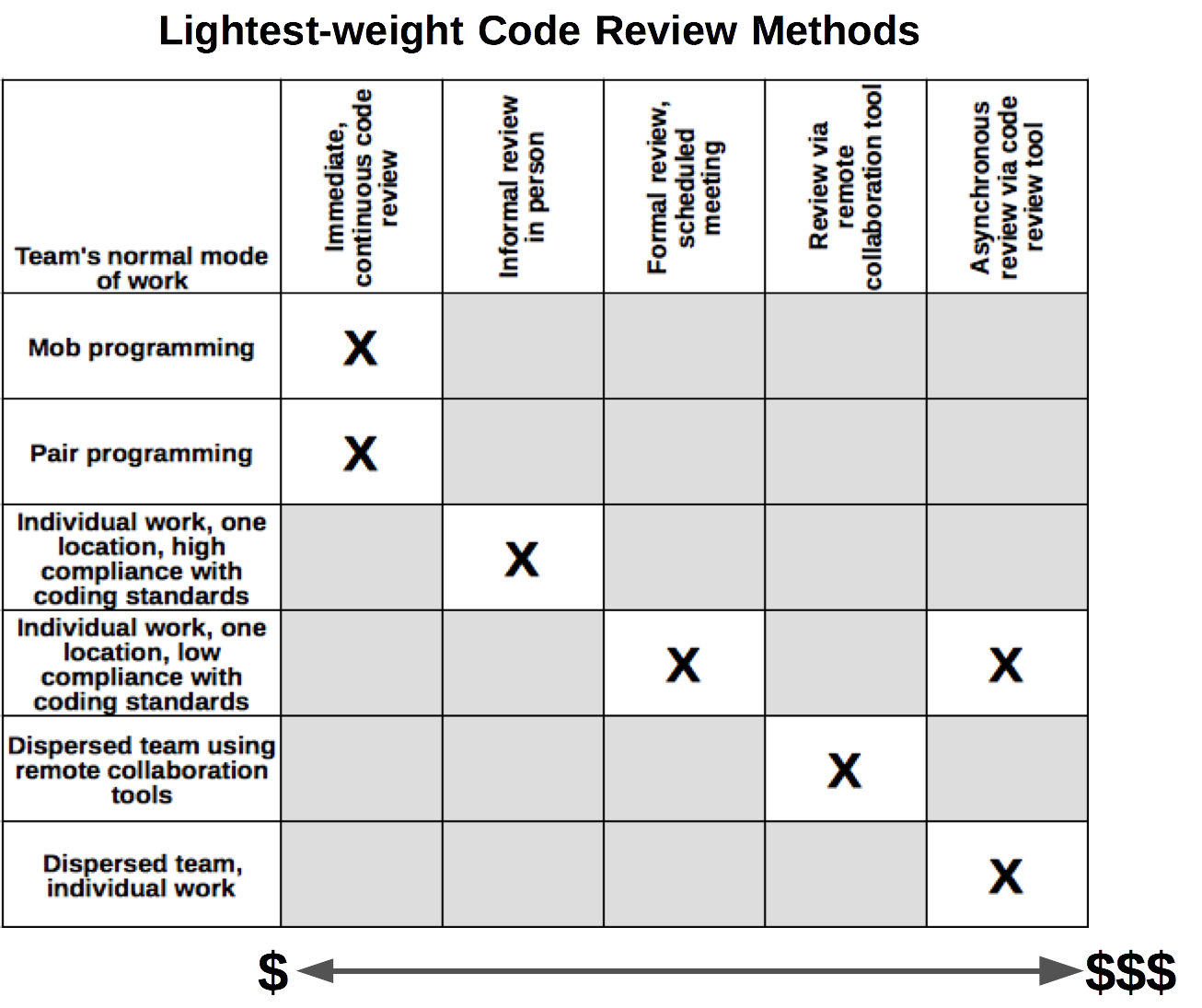

Figure 1 suggests a relationship between the level of collaboration on a team and the ways in which it is possible to conduct code reviews.

At the top of the chart, mob programming and pair programming provide continuous code review while the work is being done. There is no additional process overhead to allow for code review, as such review is part and parcel of development work and is not a separate activity.

The next level comprises teams that are collocated but do not practice mob programming or pair programming; at least, not most of the time. Because team members are collocated, it is relatively easy for them to find a peer to review their code when necessary. This reduces the need to schedule formal meetings to carry out reviews.

We also assume the team has clearly documented coding standards, that the team members are competent to understand and apply those standards, and that they have the self-discipline to do so. Those factors help to minimize the amount of time needed for code reviews.

Suboptimal situations

The first three rows on the chart depict more-or-less ideal, or close-to-ideal situations. Moving down the chart, we enter the realm where most software development teams live; what they call, for lack of a broader frame of reference, The Real World. This is where the team’s normal work practices introduce additional process overhead to support code reviews. That overhead may come into conflict with organizational goals to shorten lead times.

Row 4

The fact team members are collocated does not mean they automatically work in a collaborative way. The fourth row represents a team that works in the same location (same building or campus – maybe even the same team room) but whose members carry out their tasks individually. They only show their work when they are ready for a formal review.

What constraints might prevent a team from moving from row 4 to row 3? I’ve seen the following situations. You may have seen still more examples.

- No documented standards. Team members might be willing and able to conform to documented standards and guidelines for architecture, design, code, and non-functionals…if only they could find any such documentation. If standards exist, they aren’t well advertised. Lacking shared standards, people review code on the basis of whatever they happen to notice in the moment, based on their own individual preferences.

- Single (or few) senior team members. Many teams comprise one or two senior members and several junior members. Sometimes this is intentional; for instance, the team may be organized on the chief programmer model. More often, though, it is a result of the false economy of hiring the cheapest people one can find. Whether on a de facto basis or by virtue of formal role definition, one person makes all the architectural and design decisions. That person has to perform all the code reviews, too. (Bottleneck…delay…cost.)

- Bolshoi Ballet pattern. In the Bolshoi Ballet, every dancer is a Prima Ballerina, even if only in her own mind. The same is true on some software development teams. Team members prefer not to follow any standards they didn’t create themselves. To compensate, there has to be a designated architect or technical lead to perform code reviews and wrangle everyone into the same choreography.

Constraints like those are not easily removed. They are matters of management style and organizational culture. For teams in row 4, we want to find effective ways to conduct formal code review events, and effective ways to follow up on the decisions made in the reviews.

Given that team members work separately from one another and complete their tasks individually, there are two practical ways to conduct code reviews. One is to schedule a formal meeting to conduct the review. The other is to use a software tool that supports asynchronous review. This is a space in which tools like Upsource play.

Row 5

The last two rows in the chart represent dispersed teams. For the narrow purpose of code reviews, collaboration is a far more significant factor than collocation. A dispersed team that works collaboratively, using remote collaboration tools to interact directly throughout the work day, can handle code review without much more process overhead than a collaborative, collocated team.

Row 6

The bottom of the chart represents a dispersed, non-collaborative team. Teams that work in this manner have little choice but to conduct code reviews asynchronously and remotely. They need a tool like Upsource to support this.

Impact on lead time

Now let’s consider the effect on lead time of these various approaches to code reviews.

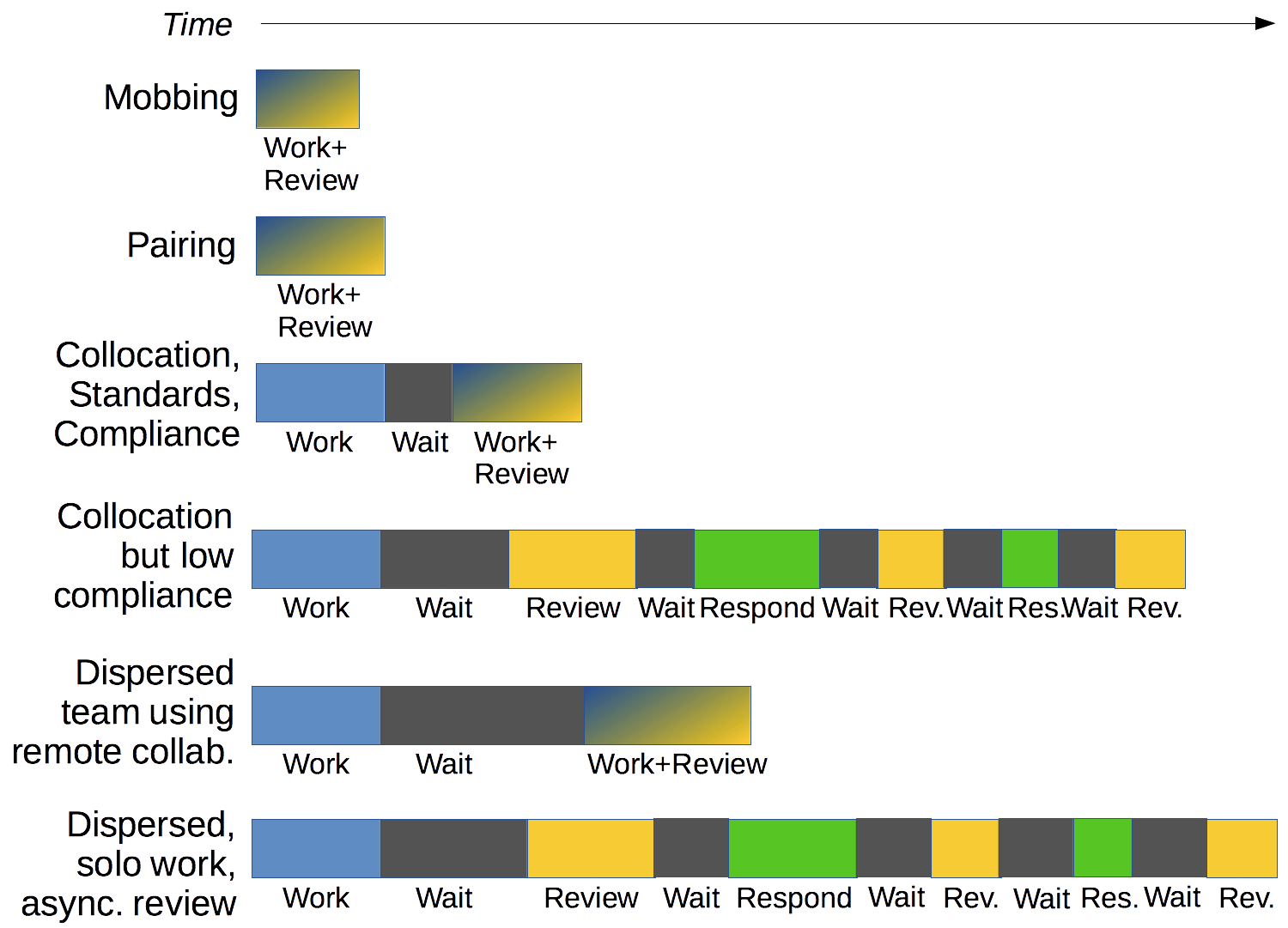

Figure 2 suggests a relationship between the level of collaboration on a team and the impact of code reviews on process overhead. The higher the level of collaboration, the less overhead is incurred by code reviews. The illustration does not depict absolute timings; it’s meant to show how the different methods of review can affect lead times.

Rows 1 and 2

The smallest impact on lead time occurs when code review is baked into the development process. This is the case with mob programming and pair programming. The diagram shows a slightly shorter lead time for mob programming than for pair programming because all the brains on the team are engaged at all times, so there is usually no delay at all in getting questions answered, and all perspectives and voices are available to comment on “review” considerations.

Rows 3 and 5

Members of a row 3 team work individually, but they are trusted to follow agreed-upon coding standards and they are collocated, so it’s easy for team members to work together on an ad hoc basis whenever they feel the need. There may be a delay from the moment a programmer is ready for a review and the moment someone is available to review the code. Once the two get together, they work through any issues immediately, so there are no further delays in the process.

Rows 4 and 6

These teams may be characterized by one or more of:

- Lack of any documented coding standards

- Lack of (a) skill or (b) will or (c) self-discipline in following established coding standards

- Low trust in team members to deliver clean code without formal review by a senior member

- Low level of collaboration; need for greater formality in the process

I suggest that this is the realm where tools like Upsource can add value. Yet, this is also the realm where code reviews have the greatest negative impact on lead time. The solution is not to add a tool that makes it slightly easier to live with the asynchronous, non-collaborative nature of code reviews; that is a workaround that enables the status quo to remain unchanged. The solution is much harder…and may not be feasible in all cases.